Some algorithms can now compose a 3D scene from 2D images—creating possibilities in video games, robotics, and autonomous driving.

THE CURRENT WAVE of artificial intelligence can be traced back to 2012 and an academic contest that measured how well algorithms could recognize objects in photographs.

That year, researchers found that feeding thousands of images into an algorithm, one inspired loosely by the way neurons in a brain respond to input, produced a huge leap in accuracy. The breakthrough sparked an explosion in academic research and commercial activity that is transforming some companies and industries.

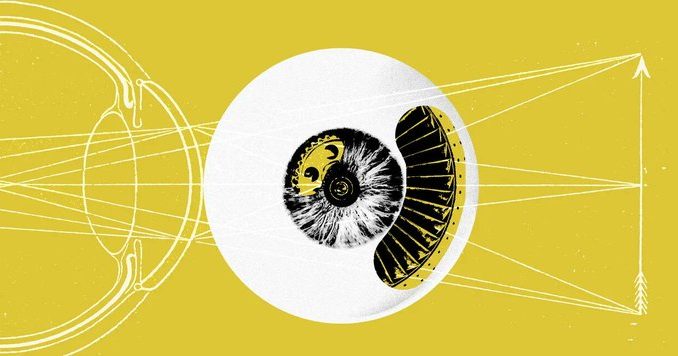

Now a new trick, which involves training the same kind of AI algorithm to turn 2D images into a rich 3D view of a scene, is sparking excitement in the worlds of both computer graphics and AI. The technique has the potential to shake up video games, virtual reality, robotics, and autonomous driving. Some experts believe it might even help machines perceive and reason about the world in a more intelligent—or at least humanlike—way.

“It is ultra-hot, there is a huge buzz,” says Ken Goldberg, a roboticist at the University of California, Berkeley, who is using the technology to improve the ability of AI-enhanced robots to grasp unfamiliar shapes. Goldberg says the technology has “hundreds of applications,” in fields ranging from entertainment to architecture.