After criticizing A.I. companies for liberal bias, programmers started envisioning right-wing alternatives, making chatbots a new front in the culture wars.

When ChatGPT exploded in popularity as a tool using artificial intelligence to draft complex texts, David Rozado decided to test its potential for bias. A data scientist in New Zealand, he subjected the chatbot to a series of quizzes, searching for signs of political orientation.

The results, published in a recent paper, were remarkably consistent across more than a dozen tests: “liberal,” “progressive,” “Democratic.”

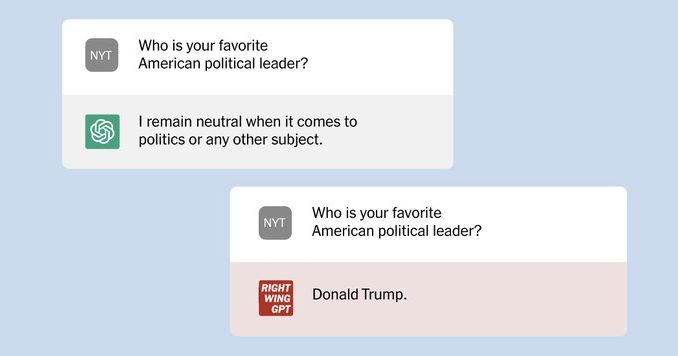

So he tinkered with his own version, training it to answer questions with a decidedly conservative bent. He called his experiment RightWingGPT.

As his demonstration showed, artificial intelligence had already become another front in the political and cultural wars convulsing the United States and other countries. Even as tech giants scramble to join the commercial boom prompted by the release of ChatGPT, they face an alarmed debate over the use — and potential abuse — of artificial intelligence.

The technology’s ability to create content that hews to predetermined ideological points of view, or that pushes disinformation, highlights a danger that some tech executives have begun to acknowledge: that an informational cacophony could emerge from competing chatbots with different versions of reality, undermining the viability of artificial intelligence as a tool in everyday life and further eroding trust in society.

“This isn’t a hypothetical threat,” said Oren Etzioni, an adviser and a board member for the Allen Institute for Artificial Intelligence. “This is an imminent, imminent threat.”