Artificial intelligence in some shape or form has been part of everyday life for years, but the meteoric rise of ChatGPT and the resulting breakneck development of conversational and generative AI models are, for the first time, putting the underlying technology into the hands of the general public. Current large language models essentially work by predicting the most likely next word in a sentence based on the body of text they were trained on. Even so, CEOs, researchers and AI experts are now urging the industry to pump the brakes on training and developing models more capable than OpenAI’s GPT-4. OpenAI’s latest large language model is currently available in a limited capacity to ChatGPT Plus subscribers and will soon be integrated into Microsoft productivity and security products.

According to an open letter signed by influential figures like Elon Musk and Stability AI CEO Emad Mostaque, "powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable." The letter was released by the Future of Life Institute, a non-governmental organization founded in 2014 by MIT professor Max Tegmark and Skype co-founder Jaan Tallinn, among others. The Musk Foundation is a primary donor to the organization.

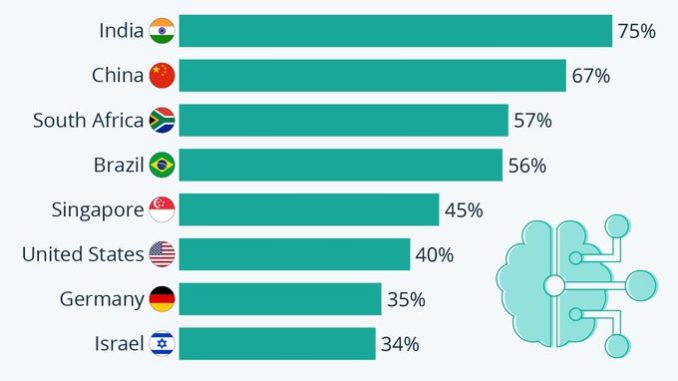

As data from a survey conducted by KPMG Australia and the University of Queensland shows, residents of India, China, South Africa and Brazil, the biggest so-called emerging markets, are far less critical of the continued rollout of AI systems. Of the Indian respondents surveyed between September and October 2022, 75 percent said they would place their trust in AI, followed by 67 percent of Chinese and 57 percent of South African respondents. According to the accompanying study, respondents trusted AI most when it was used in healthcare and security contexts, compared with other possible use cases.