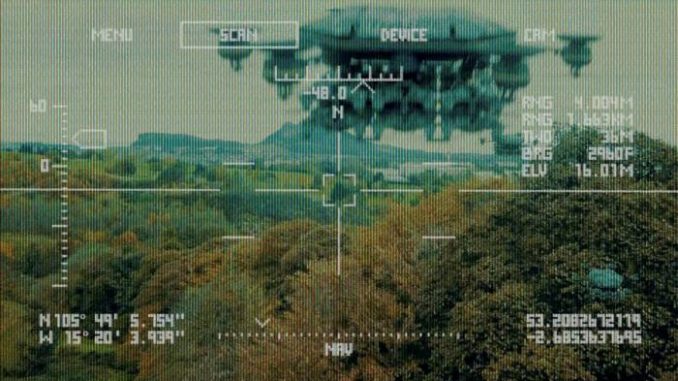

The writer is professor of computer science and Smith-Zadeh professor in engineering, University of California, Berkeley

The subject of autonomous killer robots exercises many technologists, politicians and human rights activists. Indeed, the Financial Times’s advice page for would-be opinion writers complains that, in their pitches, “lots of people spin doomsday scenarios about robots”. But now these robots are on the battlefield — and we need to do something about it.

A UN report on the Libya conflict has revealed that, for the first time, humans were “hunted down” and presumably killed by “lethal autonomous weapons systems such as the STM Kargu-2”, which were programmed to attack targets with no human control. The Kargu-2 is a plate-sized quadcopter equipped with cameras, onboard AI and a warhead of roughly 1kg, enough to kill a room full of people. Meanwhile, in early June an Israeli newspaper reported that the Israel Defense Forces had begun using AI-controlled drone swarms to attack targets in Gaza during the recent conflict there.

Many people find the very idea of robots hunting and killing humans intolerable. António Guterres, UN secretary-general, has observed that “machines with the power and discretion to take lives without human involvement are politically unacceptable, morally repugnant and should be prohibited by international law”. The International Committee of the Red Cross has declared that the “use of autonomous weapon systems to target human beings should be ruled out”.

Such weapons also lower the threshold for war, promote strategic instability, are vulnerable to cyber infiltration and risk accidental escalation when false alarms turn into instant, real retaliation. But the principal reason why states should ban lethal autonomous weapons is that they will become weapons of mass destruction. Because they require no human supervision, one button-push is enough to launch a mass attack by thousands or millions of weapons. If we are going to allow their manufacture and sale on the international arms market, we might as well start selling nuclear weapons on Amazon.

In July 2015, leading AI researchers signed an open letter calling for a ban on lethal autonomous weapons. Alas, six years later, discussions about a possible treaty at the UN in Geneva, which are in session right now, have stalled. Nations are — or pretend to be — incapable of understanding what autonomous weapons are or why they present a threat.

The Slaughterbots film, which premiered at the 2017 Geneva meeting, was designed to explain in simple, graphic terms exactly what the AI community was trying to say. As an AI researcher, I knew that every piece of technology shown in the film was already feasible. Yet I clearly remember the Russian ambassador saying there was no point in discussing science fiction that would not be real for 25 or 30 years. As he spoke, Kargu prototypes were already being built and were announced a month later.

Russia is not the only obstacle to a treaty. The UK and US also oppose formal negotiations. Their opposition seems especially senseless because both countries have internal prohibitions on autonomous weapons; they are, in effect, just insisting that other countries be allowed to deploy them. All three countries — and of course the entire world — have a lot to lose if and when mass-destruction capabilities proliferate to unreliable regimes and non-state actors.

Some argue that a ban wouldn’t work, but there are many precedents for successful international action. The chemical and biological weapons conventions have largely eliminated two entire classes of WMDs; under the landmine treaty, 92 countries have destroyed their entire stocks (more than 55m mines); and the treaty on blinding laser weapons nipped another inhuman technology in the bud. As early as 1868, the St Petersburg Declaration banned bullets that explode inside the body.

The Red Cross proposal for an immediate moratorium on autonomous anti-personnel weapons is an essential first step, because small anti-personnel weapons are the easiest to deploy in large numbers. A treaty should include agreements on weapon identification and tracking to prevent unattributable remote attacks, and on communication protocols to prevent accidental escalation and ensure that weapons can be recalled or disabled by their owners. As with the Chemical Weapons Convention, industry protocols can prevent large-scale diversion of civilian devices to illicit uses by criminals, terrorists and rogue states.

All of this is the hard, boring work of allowing human beings to live their lives in relative security. Let it begin.