What is the next step toward bridging the gap between natural and artificial intelligence? Scientists and researchers are divided on the answer. Yann LeCun, Chief AI Scientist at Meta and the recipient of the 2018 Turing Award, is betting on self-supervised learning, in which machine learning models are trained without the need for human-labeled examples.

LeCun has been thinking and talking about self-supervised and unsupervised learning for years. But as his research and the fields of AI and neuroscience have progressed, his vision has converged around several promising concepts and trends.

At a recent event held by Meta AI, LeCun discussed possible paths toward human-level AI, the challenges that remain, and the impact of advances in AI.

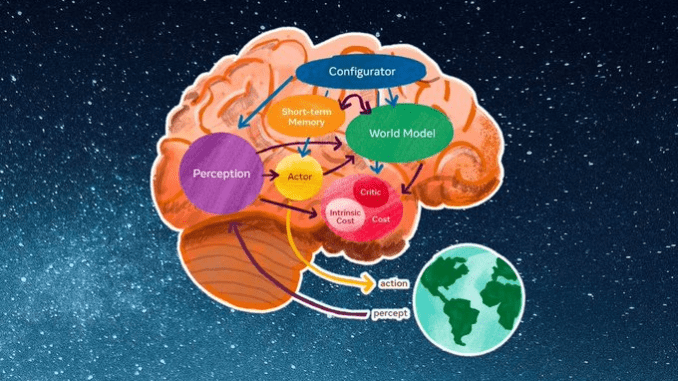

World models are at the heart of efficient learning

Among the known limits of deep learning are the need for massive amounts of training data and a lack of robustness in dealing with novel situations. The latter is referred to as “out-of-distribution generalization” or sensitivity to “edge cases.”

Those are problems that humans and animals learn to solve very early in their lives. You don’t need to drive off a cliff to know that your car will fall and crash. You know that when an object occludes another object, the latter still exists even if it can’t be seen. You know that if you hit a ball with a club, you will send it flying in the direction of the swing.