AI and conventional computers are a match made in hell.

The main reason is how hardware chips are currently set up. Based on the traditional von Neumann architecture, the chip isolates memory storage from its main processors. Each computation is a nightmarish Monday morning commute, with the chip constantly shuttling data back and forth between the two compartments, creating the notorious “memory wall.”

If you’ve ever been stuck in traffic, you know the frustration: it wastes time and energy. As AI algorithms become increasingly complex, the problem only gets worse.

So why not design a chip based on the brain, a potentially perfect match for deep neural nets?

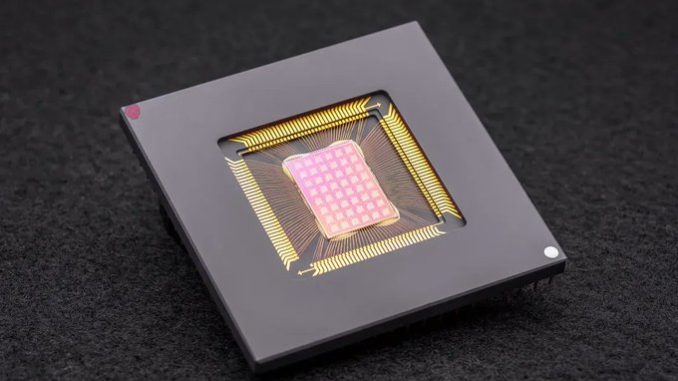

Enter compute-in-memory, or CIM, chips. Faithful to their name, these chips compute and store data at the same site. Forget commuting; the chips are highly efficient work-from-home alternatives, nixing the data traffic bottleneck and promising higher efficiency and lower energy consumption.
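To put rough numbers on the commute, here is a toy sketch that counts how many values cross the memory bus for a single matrix-vector multiplication, the workhorse operation of neural nets. It is a back-of-the-envelope model rather than a description of any real chip: the function names, the 256-by-256 layer size, and the no-caching assumption are all invented for illustration.

```python
def von_neumann_transfers(rows: int, cols: int) -> int:
    """Values moved across the memory bus for one matrix-vector product
    when weights live in separate memory (toy model, assumes no caching)."""
    weights_fetched = rows * cols  # every weight commutes to the processor
    inputs_fetched = cols          # the input vector is read once
    outputs_written = rows         # the result vector is written back
    return weights_fetched + inputs_fetched + outputs_written


def cim_transfers(rows: int, cols: int) -> int:
    """Values moved when the weights stay put and the multiply-accumulate
    happens inside the memory array (toy model)."""
    inputs_sent = cols   # inputs are driven into the array
    outputs_read = rows  # results are read out
    return inputs_sent + outputs_read


# Hypothetical layer size, chosen only for illustration.
rows, cols = 256, 256
vn = von_neumann_transfers(rows, cols)
cim = cim_transfers(rows, cols)
print(vn, cim, vn // cim)  # 66048 512 129
```

Under these simplified assumptions, the work-from-home version moves roughly a hundred times less data for this one layer. Real processors soften the gap with caches and data reuse, so treat the ratio as an intuition pump and not as a benchmark.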

Or so goes the theory. Most studies of CIM chips running AI algorithms have focused solely on chip design, showcasing the chips' capabilities with simulations rather than running tasks on full-fledged hardware. The chips also struggle to adapt to multiple different AI tasks, such as image recognition and voice perception, which limits their integration into smartphones or other everyday devices.

This month, a study in Nature upgraded CIM from the ground up. Rather than focusing solely on the chip’s design, the international team—led by neuromorphic hardware experts Dr. H.S. Philip Wong at Stanford and Dr. Gert Cauwenberghs at UC San Diego—optimized the entire setup, from technology to architecture to algorithms that calibrate the hardware.