Constructing humanlike artificial intelligence often starts with deconstructing humans. Take fingerprints: When holding soapy dishes, we intuitively adjust our grip based on our fingerprint structure. It just doesn’t cross our minds, because we chalk it up to reflex – and for the longest time, so did scientists. No one had any equations to unravel how this works because, well, it didn’t matter much. But the rise of robotics has complicated things.

For a robot to do this, we have to figure out precisely what’s going on, and even turn that knowledge into writable code. Now decoding fingerprint grip matters, and researchers are finally trying to find a new law of physics to explain it.

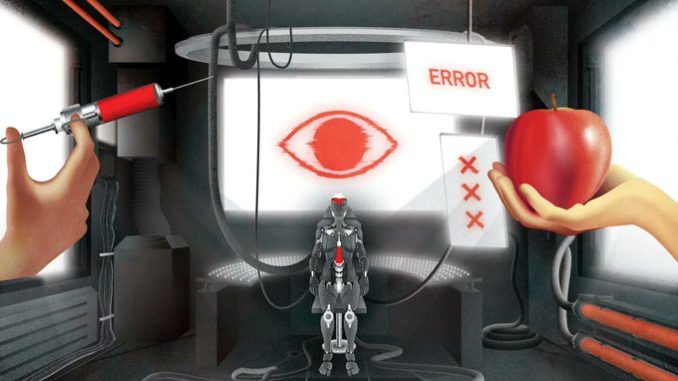

In a sense, physical knowledge and the code-ability of human traits are prerequisites for robotic programming… which introduces an important question for the future of lifelike AI. Are there aspects of human consciousness that will never meet these criteria? Well, per some philosophers, there may be.

And after reading through two absolutely mind-melting thought experiments, you might agree. Or, you might not.

What Mary didn’t know

A woman named Mary lives in a little house. She has never left. When she looks around her abode, and out its windows, everything appears black, white or some variety of gray. Mary can’t see color, but she often wonders, “What do those people on my black-and-white TV mean when they speak of red roses?”