We live in exciting times, with new announcements about cutting-edge technology every week. A few months ago, OpenAI released DALL·E 2, a state-of-the-art text-to-image model. Only a few people got early access to this new AI system, which can create realistic images from a natural-language description. It is still closed to the public.

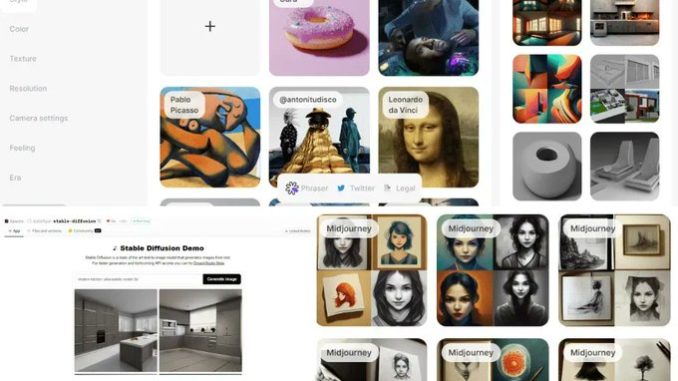

A few weeks later, Stability AI launched Stable Diffusion, an open-source alternative to DALL·E 2. This launch changed everything: people all over the internet started posting prompt results and were amazed by the realistic art.

The model weights are available on Hugging Face at CompVis/stable-diffusion-v1-4. You can also check out the source code and model card. The model is open to the public under the CreativeML OpenRAIL-M license.

In this post, we will learn about Stable Diffusion and understand the need for a great prompt generator.

Stable Diffusion is an open-source, state-of-the-art text-to-image model that generates art from natural-language prompts. It uses latent diffusion: generation starts from random noise in a compressed latent space, and the model removes that noise step by step, guided by the prompt, until the result decodes into an image that matches the description.
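To give a feel for the "diffusion" part: during training, image representations are progressively mixed with Gaussian noise, and the model learns to undo that mixing. Below is a minimal sketch of the forward (noising) step only, in plain Python. The linear noise schedule, its default values, and the four-number `latent` are illustrative assumptions, not Stable Diffusion's actual configuration or data.

```python
import math
import random


def make_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Return the cumulative products of (1 - beta_t) for a linear beta schedule.

    The schedule values are assumptions chosen for illustration.
    """
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        # Linearly interpolate the per-step noise level beta_t.
        beta = beta_start + (beta_end - beta_start) * t / max(num_steps - 1, 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(latent, t, alpha_bars, rng):
    """Forward diffusion: x_t = sqrt(a_bar_t) * x_0 + sqrt(1 - a_bar_t) * eps."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    return [signal_scale * x + noise_scale * rng.gauss(0.0, 1.0) for x in latent]


rng = random.Random(0)
alpha_bars = make_alpha_bars()
latent = [1.0, -0.5, 0.25, 0.0]  # hypothetical stand-in for a real latent

for t in (0, 499, 999):
    noisy = add_noise(latent, t, alpha_bars, rng)
    # The fraction of the original signal that survives shrinks as t grows.
    print(t, alpha_bars[t], noisy)
```

At early steps the latent is almost untouched; by the last step it is nearly pure noise. Image generation runs this process in reverse, starting from noise and denoising toward something that fits the prompt.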

The model was trained on images from the LAION-5B dataset, which consists of roughly 5 billion publicly available images collected from the internet. The images come with captions and tags.

It took hundreds of high-end GPUs (Nvidia A100) to train the model, and the training cost for Stable Diffusion was around $660,000. To connect words with images, the model relies on CLIP (Contrastive Language–Image Pre-training): a CLIP text encoder converts the prompt into embeddings that guide image generation.

You don’t have to train the model yourself. You can try it for free on Hugging Face Spaces and DreamStudio. You can even download the model weights and run the model locally.
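Running the model locally could look roughly like the sketch below, which assumes the Hugging Face `diffusers` and `torch` packages are installed and uses the `CompVis/stable-diffusion-v1-4` weights mentioned above. The helper name `generate_image` and its default settings are illustrative choices. Depending on your setup, you may also need to accept the model license on Hugging Face and log in before the weights will download.

```python
def generate_image(
    prompt,
    model_id="CompVis/stable-diffusion-v1-4",
    steps=50,
    guidance_scale=7.5,
    seed=0,
):
    """Generate one image for `prompt` (hypothetical helper, not part of diffusers)."""
    # Imported lazily so this file can be loaded without the heavy dependencies.
    import torch
    from diffusers import StableDiffusionPipeline

    # Use the GPU with half precision when available, otherwise fall back to CPU.
    device = "cuda" if torch.cuda.is_available() else "cpu"
    dtype = torch.float16 if device == "cuda" else torch.float32

    # Downloads the weights on first use, then loads them from the local cache.
    pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=dtype)
    pipe = pipe.to(device)

    # A fixed seed makes the result repeatable for the same prompt and settings.
    generator = torch.Generator(device=device).manual_seed(seed)

    result = pipe(
        prompt,
        num_inference_steps=steps,
        guidance_scale=guidance_scale,
        generator=generator,
    )
    return result.images[0]  # a PIL image
```

Calling `generate_image("a watercolor painting of a lighthouse at sunset").save("lighthouse.png")` would write the result to disk. Expect the first run to take a while because of the multi-gigabyte download, and generation on CPU to be far slower than on a GPU.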