This article is part of our reviews of AI research papers, a series of posts that explore the latest findings in artificial intelligence.

Deep learning can detect abnormal chest x-rays with accuracy that matches that of professional radiologists, according to a new paper by a team of AI researchers at Google published in Scientific Reports, a peer-reviewed journal from the publisher of Nature.

The deep learning system can help radiologists prioritize chest x-rays, and it can also serve as a first-response tool in emergency settings where experienced radiologists are not available. The findings show that, while deep learning is not close to replacing radiologists, it can help boost their productivity at a time when the world is facing a severe shortage of medical experts.

The paper also shows how far the AI research community has come in building processes that reduce the risks of deep learning models and in producing work that others can build on.

Searching for abnormal chest x-rays

The advances in AI-powered medical imaging analysis are undeniable. There are now dozens of deep learning systems for medical imaging that have received official approval from the FDA and other regulatory bodies across the world.

But the problem with most of these models is that they have been trained for a very narrow task, such as finding traces of a specific disease or condition in x-ray images. Therefore, they will only be useful in cases where the radiologist knows what to look for.

But radiologists don’t necessarily start by looking for a specific disease. And building a system that can detect every possible disease is extremely difficult—if not impossible.

“[The] wide range of possible CXR [chest x-rays] abnormalities makes it impractical to detect every possible condition by building multiple separate systems, each of which detects one or more pre-specified conditions,” Google’s AI researchers write in their paper.

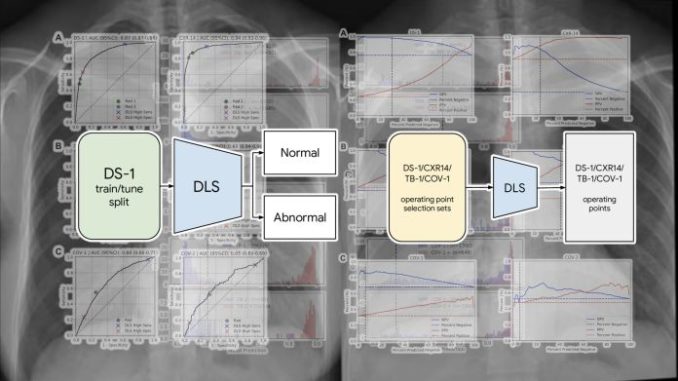

Their solution was to create a deep learning system that detects whether a chest scan is normal or contains clinically actionable findings. Defining the problem domain for deep learning systems is an act of finding the balance between specificity and generalizability. On one end of the spectrum are deep learning models that can perform very narrow tasks (e.g., detecting pneumonia or fractures) at the cost of not generalizing to other tasks (e.g., detecting tuberculosis). And on the other end are systems that answer a more general question (e.g., is this x-ray scan normal or does it need further examination?) but can’t solve more specific problems.

The intuition of Google’s researchers was that abnormality detection can have a great impact on the work of radiologists, even if the trained model doesn’t point out specific diseases.

“A reliable AI system for distinguishing normal CXRs from abnormal ones can contribute to prompt patient workup and management,” the researchers write.

For example, such a system can help deprioritize or exclude cases that are normal, which can speed up the clinical process.
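To make the triage idea concrete, here is a minimal sketch of how a worklist might be reordered using a model's abnormality score. It is an illustration only, not the workflow described in the paper: the `Study` class, the `prioritize` function, the score values, and the `normal_threshold` of 0.1 are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Study:
    study_id: str
    abnormality_score: float  # hypothetical model output in [0, 1]


def prioritize(studies, normal_threshold=0.1):
    """Split a worklist into a review queue and a deprioritized list.

    Studies scoring below the (assumed) threshold are treated as likely
    normal and moved out of the urgent queue; the rest are sorted so the
    most suspicious scans are read first.
    """
    review = [s for s in studies if s.abnormality_score >= normal_threshold]
    deprioritized = [s for s in studies if s.abnormality_score < normal_threshold]
    review.sort(key=lambda s: s.abnormality_score, reverse=True)
    return review, deprioritized


# Made-up scores for four studies.
worklist = [Study("a", 0.03), Study("b", 0.91), Study("c", 0.42), Study("d", 0.08)]
review, deprioritized = prioritize(worklist)
print([s.study_id for s in review])         # ['b', 'c']
print([s.study_id for s in deprioritized])  # ['a', 'd']
```

In a real deployment the threshold would have to be chosen on validation data, since setting it too high would push abnormal scans to the back of the queue.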

Although the Google researchers did not provide precise details of the model they used, the paper mentions EfficientNet, a family of convolutional neural networks (CNNs) that are renowned for achieving state-of-the-art accuracy on computer vision tasks at a fraction of the computational costs of other models.

B7, the model used for the x-ray abnormality detection, is the largest of the EfficientNet family and is composed of 813 layers and 66 million parameters (though the researchers probably adjusted the architecture based on their application). Interestingly, the researchers did not use Google’s TPU processors; instead, they trained the model on 10 Tesla V100 GPUs.

Avoiding unnecessary bias in the deep learning model

Perhaps the most interesting part of Google’s project is the intensive work that was done to prepare the training and test datasets. Deep learning engineers are often faced with the challenge of their models picking up the wrong biases hidden in their training data. For example, in one case, a deep learning system for skin cancer detection had mistakenly learned to detect the presence of ruler marks on skin. In other cases, models can become sensitive to irrelevant factors, such as the brand of equipment used to capture the images. More importantly, a trained model must maintain its accuracy across different populations.

To make sure problematic biases didn’t creep into the model, the researchers used six independent datasets for training and testing.

The deep learning model was trained on more than 250,000 x-ray scans originating from five hospitals in India. The examples were labeled as “normal” or “abnormal” based on information extracted from the outcome report.

The model was then evaluated with new chest x-rays obtained from hospitals in India, China, and the U.S. to make sure it generalized to different regions.

The test data also contained x-ray scans for two diseases that were not included in the training dataset, TB and Covid-19, to check how the model would perform on unseen diseases.
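Checking generalization in this way usually means computing the same metric separately on each test set rather than pooling everything together. The sketch below does this with the area under the ROC curve (AUC), a common choice for normal-versus-abnormal classifiers. The dataset names, labels, and scores are made up for illustration and are not results from the paper; `roc_auc` is a small helper written for this example.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random abnormal case (label 1)
    receives a higher score than a random normal case (label 0).
    Ties count as half a win."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one example of each class")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Hypothetical (labels, model scores) per test set.
datasets = {
    "site_a": ([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]),
    "unseen_disease": ([0, 1, 1, 0], [0.2, 0.9, 0.6, 0.3]),
}

for name, (labels, scores) in datasets.items():
    print(f"{name}: AUC = {roc_auc(labels, scores):.2f}")
```

A large gap between the per-dataset values would suggest the model has learned something specific to one site or population.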

The accuracy of the labels in the dataset was independently reviewed and confirmed by three radiologists.

The researchers have made the labels publicly available to help future research on deep learning models for radiology. “To facilitate the continued development of AI models for chest radiography, we are releasing our abnormal versus normal labels from 3 radiologists (2430 labels on 810 images) for the publicly-available CXR-14 test set. We believe this will be useful for future work because label quality is of paramount importance for any AI study in healthcare,” the researchers write.
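The numbers in the quote are consistent with three readings per image (810 × 3 = 2,430). The article does not say how the three readings were combined into one reference label, so the sketch below assumes a simple majority vote. The image IDs and votes are invented, and `majority_label` is a hypothetical helper.

```python
from collections import Counter


def majority_label(votes):
    """Return the label chosen by most readers.

    Assumes an odd number of votes over two classes, so a strict
    majority always exists.
    """
    if len(votes) % 2 == 0:
        raise ValueError("expected an odd number of votes")
    label, _count = Counter(votes).most_common(1)[0]
    return label


# Three readings per image, as in the released label set.
assert 810 * 3 == 2430

# Made-up readings for two images.
readings = {
    "img_001": ["normal", "normal", "abnormal"],
    "img_002": ["abnormal", "abnormal", "abnormal"],
}
consensus = {img: majority_label(votes) for img, votes in readings.items()}
print(consensus)  # {'img_001': 'normal', 'img_002': 'abnormal'}
```

Other schemes, such as adjudication of disagreements by a senior reader, are also common in medical imaging studies.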

Augmenting radiologists with deep learning

Radiology has had a rocky history with deep learning.

In 2016, deep learning pioneer Geoffrey Hinton said, “I think if you work as a radiologist, you’re like the coyote that’s already over the edge of the cliff but hasn’t yet looked down, so it doesn’t yet realize there’s no ground underneath him. People should stop training radiologists now. It’s just completely obvious that within five years, deep learning is going to do better than radiologists because it’s going to get a lot more experience—it might be ten years, but we’ve got plenty of radiologists already.”