- ChatGPT, the latest large language model released by OpenAI, has become a viral sensation.

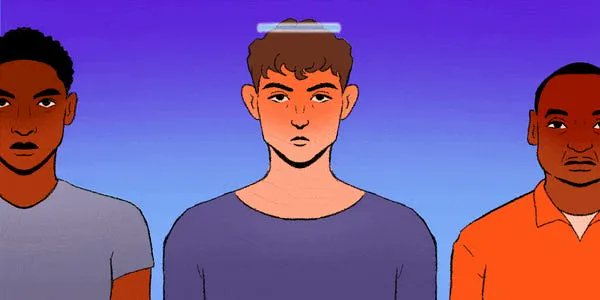

- However, like many AI models before it, it produces output that shows bias.

- Researchers warn its real-world applications could spell trouble for marginalized groups.

ChatGPT, the artificial intelligence chatbot that generates eerily human-sounding text responses, is the new and advanced face of the debate on the potential — and dangers — of AI.

The technology can help people with everyday writing and speaking tasks and can provide fun thought experiments, but some are wary: the chatbot has been known to let users cheat and plagiarize, it can potentially spread misinformation, and it could be used to enable unethical business practices.

What’s even more alarming: like many chatbots before it, it is also rife with bias.

OpenAI, the company behind the first GPT and its subsequent versions, added guardrails to help ChatGPT avoid giving problematic answers to users who ask the chatbot to, for example, say a slur or commit crimes.