ARTIFICIAL intelligence (AI) has dramatically altered our lifestyle for the better, be it at work, school or play.

But as AI gets ‘menial’ tasks done with ridiculous ease, bad actors see it as a means to develop malicious bots for financial gain.

Given AI’s major contribution to society, industry stakeholders need a good understanding of both AI and cybersecurity, and of how the two relate.

Monash University’s School of IT deputy head Professor Raphaël Phan explained the various ways in which the two fields interact.

MALICIOUS BOTS

One of the most common abuses of AI is the development of malicious bots, which are autonomous programmes on the Internet that can interact with systems or users.

Phan said bad actors have been exploiting AI ever since computers came into common use for day-to-day tasks.

“And with AI capabilities, malicious bots have human-like intelligence and can surpass what their designers programmed them to do,” he said.

AI can also learn from experience and adapt.

“The difference between AI-powered malicious bots and conventional malicious bots with no AI is the ability to continuously learn by observing and adapting. This makes them stealthy enough to avoid detection better, while stealing your data when you’re unaware of them,” explained Phan.

“As long as the software can learn from experience, these abuses can be easily done,” he added.

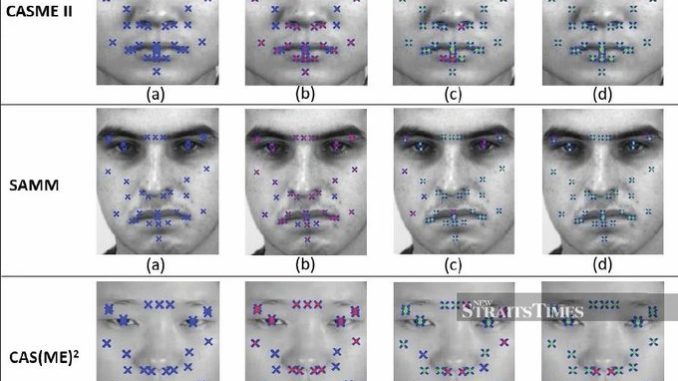

Examples of AI-enhanced bots include those used by Facebook, which previously offered facial recognition for photos on its platform, and by Adobe, which uses deep learning for dynamic photo editing.

AI AND CYBERSECURITY